Container is the new process and Kubernetes is the new Unix.

Ashish Pandey

Containers are lightweight and are widely used to package and deploy microservices (see my previous blog on containers).

Once a microservice is deployed in a container, it can be scheduled and scaled independently. But when you are dealing with hundreds of microservices, doing that manually would be inefficient.

This is where container orchestration tools such as Kubernetes come into the picture. Kubernetes takes care of scheduling, controlling and scaling these microservices by orchestrating the underlying containers across a cluster of nodes.
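To make the idea of scheduling across a cluster concrete, here is a minimal sketch in Python. It is not how the Kubernetes scheduler is implemented; it is a toy that assumes each pod requests a fixed amount of CPU and places it on the node with the most free CPU. The `Node` class, the `schedule` function, and the node and pod names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A hypothetical worker node with a fixed CPU capacity (in millicores)."""
    name: str
    cpu_capacity: int
    pods: list = field(default_factory=list)

    def cpu_free(self, requests):
        # Capacity minus the CPU requested by pods already placed here.
        return self.cpu_capacity - sum(requests[p] for p in self.pods)


def schedule(pod_requests, nodes):
    """Place each pod on the node with the most free CPU that can fit it.

    Returns a dict of pod name -> node name (None if nothing fits).
    """
    placement = {}
    for pod, cpu in pod_requests.items():
        candidates = [n for n in nodes if n.cpu_free(pod_requests) >= cpu]
        if not candidates:
            placement[pod] = None  # no node has room for this pod
            continue
        best = max(candidates, key=lambda n: n.cpu_free(pod_requests))
        best.pods.append(pod)
        placement[pod] = best.name
    return placement


nodes = [Node("node-a", 1000), Node("node-b", 2000)]
requests = {"cart": 500, "orders": 1200, "search": 900, "reports": 1500}
placement = schedule(requests, nodes)
print(placement)
```

With these made-up numbers, `cart` and `orders` land on `node-b`, `search` lands on `node-a`, and `reports` finds no node with enough free CPU. Doing this by hand for hundreds of microservices is exactly the work an orchestrator takes over.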

So containers are to Kubernetes what processes are to Unix, albeit across a larger, distributed cluster of servers.

Kubernetes multiplies the usefulness of containers and eases their management at scale.

Kubernetes grew out of Google's years of experience managing containers and services at Google scale. It is designed to orchestrate many types of container, including Docker, rkt, LXD and more. Thanks to its Container Runtime Interface (CRI), it matters little which container runtime is used underneath.

In Kubernetes, Pods wrap the containers that the container runtime executes. A Pod is the smallest unit for scheduling and scaling.

Multiple Pods, of the same type or of different types, are grouped together to make up a service.
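Kubernetes services typically pick their Pods by matching labels. The sketch below illustrates that grouping idea in plain Python. It is only an illustration: the `select_pods` helper, the pod names and the labels are made up for this example and are not part of any Kubernetes API.

```python
def select_pods(pods, selector):
    """Return names of pods whose labels contain every key/value in selector."""
    return [
        name
        for name, labels in pods.items()
        if all(labels.get(key) == value for key, value in selector.items())
    ]


# Hypothetical pods and their labels.
pods = {
    "web-1": {"app": "shop", "tier": "frontend"},
    "web-2": {"app": "shop", "tier": "frontend"},
    "db-1": {"app": "shop", "tier": "backend"},
    "blog-1": {"app": "blog", "tier": "frontend"},
}

frontend = select_pods(pods, {"app": "shop", "tier": "frontend"})
print(frontend)  # ['web-1', 'web-2']
```

A selector with fewer keys matches more Pods, so `{"app": "shop"}` would group the frontend and backend Pods of the shop together.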

Why do you need Kubernetes?

Before we discuss that, let's look at the main objectives when creating a modern app. The matter is fairly subjective, and the answer can differ based on the domain you come from. This subjectivity can be minimised by looking at the 12-factor app, which is the de facto standard for building a modern web app. Refer to the 12-factor app site to know more.

Kubernetes helps you achieve a lot of the objectives suggested by the 12-factor app.

For example, it encourages version control not only of your codebase across environments but of your configuration as well. It eases admin processes, enables concurrency, provides disposability, isolates dependencies, binds services with logical port-binding and supports the use of stateless processes.

From the Ops perspective, Kubernetes fits the bill as a good orchestrator of services and apps.

Components and objects such as pods, services and replication controllers are defined in YAML files, which are human-readable as well as machine-readable.
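As a rough illustration of what such a definition contains, the sketch below builds a minimal Pod manifest as a Python dictionary and prints it as JSON, which `kubectl` accepts alongside YAML. The pod name `hello-web`, the label and the `nginx:1.25` image are placeholders chosen for this example.

```python
import json

# A minimal Pod definition; names, labels and image are placeholders.
pod_manifest = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "hello-web", "labels": {"app": "hello"}},
    "spec": {
        "containers": [
            {
                "name": "web",
                "image": "nginx:1.25",
                "ports": [{"containerPort": 80}],
            }
        ]
    },
}

manifest_json = json.dumps(pod_manifest, indent=2)
print(manifest_json)
```

The same structure written in YAML is what you would normally keep under version control next to your application code.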

Being an open-source technology, it can be repackaged and sold as a commercial product.

Open-source leader Red Hat uses Kubernetes to build OpenShift, much as it uses Linux to build Red Hat Enterprise Linux.

OpenShift uses Kubernetes for container orchestration and adds customised features for container security, image management and application lifecycle management.

Having said that, Kubernetes is not only a container orchestration tool but also a cluster management tool. Containers are launched over a cluster of host machines, and hosts can be added, removed or replaced at any time without downtime for end users.
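One way to picture how a host can disappear without users noticing is a reconciliation step: compare the desired number of replicas with what is still running on healthy hosts, then start replacements elsewhere. The following is a simplified sketch under that assumption, not Kubernetes code. The `reconcile` function, the `web-r1` naming scheme and the node names are hypothetical.

```python
import itertools


def reconcile(desired, placements, healthy_nodes, prefix="web"):
    """Top the pod count back up to `desired` using only healthy nodes.

    placements maps pod name -> node name. Returns a new mapping.
    """
    # Pods on nodes that left the cluster are considered lost.
    kept = {pod: node for pod, node in placements.items() if node in healthy_nodes}
    nodes = sorted(healthy_nodes)
    ids = itertools.count(1)
    while len(kept) < desired and nodes:
        # Count pods per node and pick the least loaded one.
        load = {n: 0 for n in nodes}
        for node in kept.values():
            load[node] += 1
        target = min(nodes, key=lambda n: load[n])
        # Generate a replacement name that is not already in use.
        name = f"{prefix}-r{next(ids)}"
        while name in kept:
            name = f"{prefix}-r{next(ids)}"
        kept[name] = target
    return kept


before = {"web-1": "node-a", "web-2": "node-b", "web-3": "node-c"}
# node-b has been removed from the cluster.
after = reconcile(3, before, healthy_nodes={"node-a", "node-c"})
print(after)
```

In this example the two surviving replicas keep serving traffic while a replacement, `web-r1`, is started on `node-a`, so the replica count returns to three.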

This makes Kubernetes one of the favourite orchestration tools of cloud service providers.

It is offered as a hosted service by almost all the major public and private cloud service providers: Elastic Kubernetes Service (EKS) by AWS, Azure Kubernetes Service (AKS) by Microsoft Azure, Google Kubernetes Engine (GKE) by Google, VMware Kubernetes Engine (VKE) by VMware, and dozens more.

These are just a few examples; many more vendors host Kubernetes and resell it along with their own products.

Kubernetes has already been declared a graduated project by the Cloud Native Computing Foundation (CNCF), which signals that it is production-ready. A great deal of work is also going into tools that integrate with Kubernetes and add more capabilities to it.

With tools like Prometheus for monitoring, Envoy as a service proxy and Helm as a package manager, the CNCF is accelerating the growth and adoption of the Kubernetes platform, and it is just getting warmed up.


Recommended Reading

Why Go is the best programming language for System Programmers?

Go is a compiled programming language developed by Google. Robert Griesemer, Rob Pike, and Ken Thompson were the minds behind Go. These 3 people designed Golang at Google. Google first designed Golang in the year 2007. It is also known as Golang that is tak...

Get Kubernetes Certified

Kubernetes is increasingly becoming the de-facto standard for container-orchestration. It is used for deploying and managing microservice-based applications. It also helps in scaling and maintaining as well. It is open-source software that was initially rel...

What is Helm in Kubernetes?

In this article, we would be discussing what Helm is and how it is used for the simple deployment of applications in the Kubernetes network. Continue reading to learn more about Helm in Kubernetes.

How to install Kubernetes Clusters Using Terraform?

Kubernetes is a container orchestration platform that can be used to deploy and manage a containerized applications. Generally, Microservices-based applications are first converted into Docker (or other container runtimes) images and then these microservice...

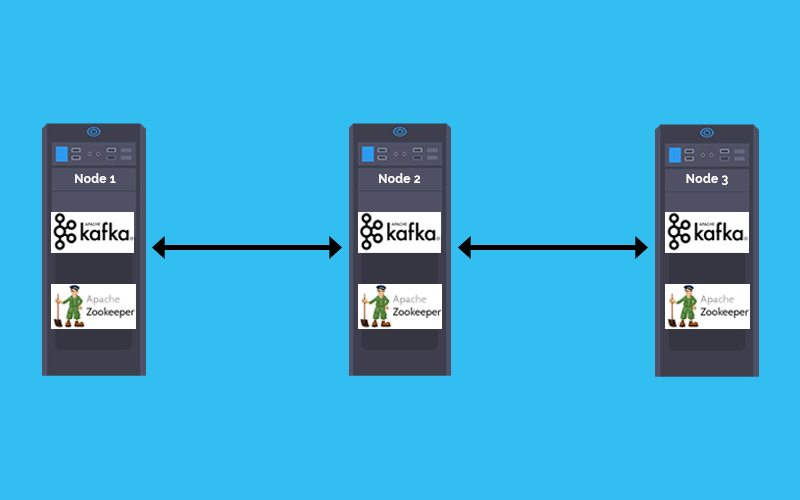

How to deploy Kafka and Zookeeper cluster on Kubernetes

In a Microservices based architecture message -broker plays a crucial role in inter-service communication. The combination of Kafka and zookeeper is one of the most popular message broker. This tutorial explains how to Deploy Kafka and zookeeper stateful se...

How to create a Docker Container Registry

Running a Local Docker Registry One of the most frequently asked question by developers is, how to set up a container registry for storing the Docker images?

How to install Kubernetes Cluster on AWS EC2 instances

When someone begins learning Kubernetes, the first challenge is to setup the kubernetes cluster. Most of the online tutorials take help of virtual boxes and minikubes, which are good to begin with but have a lot of limitations. This article will guide you t...

Why Learning Docker Containers is so important in IT industry?

Containerization/Dockerization is a way of packaging your application and run it in an isolated manner so that it does not matter in which environment it is being deployed. In the DevOps ecosystem containers are being used at large scale. and this is just...

zekeLabs among Top 10 destinations to learn AI & Machine Learning

Artificial Intelligence course from zekeLabs has been featured as one of the Top-10 AI courses in a recent study by Analytics India Magazine in 2018. Here is what makes our courses one of the best in the industry.

Top 3 Applications of Apache Spark

Distributed computation got with induction of Apache Spark in Big Data Space. Lightning performance, ease of integration, abstraction of inner complexity & programmable using Python, Scala, Java & R makes it one of the . Here we are discussing most widely ...

How can I become a data scientist from an absolute beginner level to an advanced level?

This is the question that every budding Data Scientist asks himself while starting on the tedious yet adventurous journey to the world of Data Science. Other than struggling with the factors like self-doubt the beginners look for the Effective Learning Path...